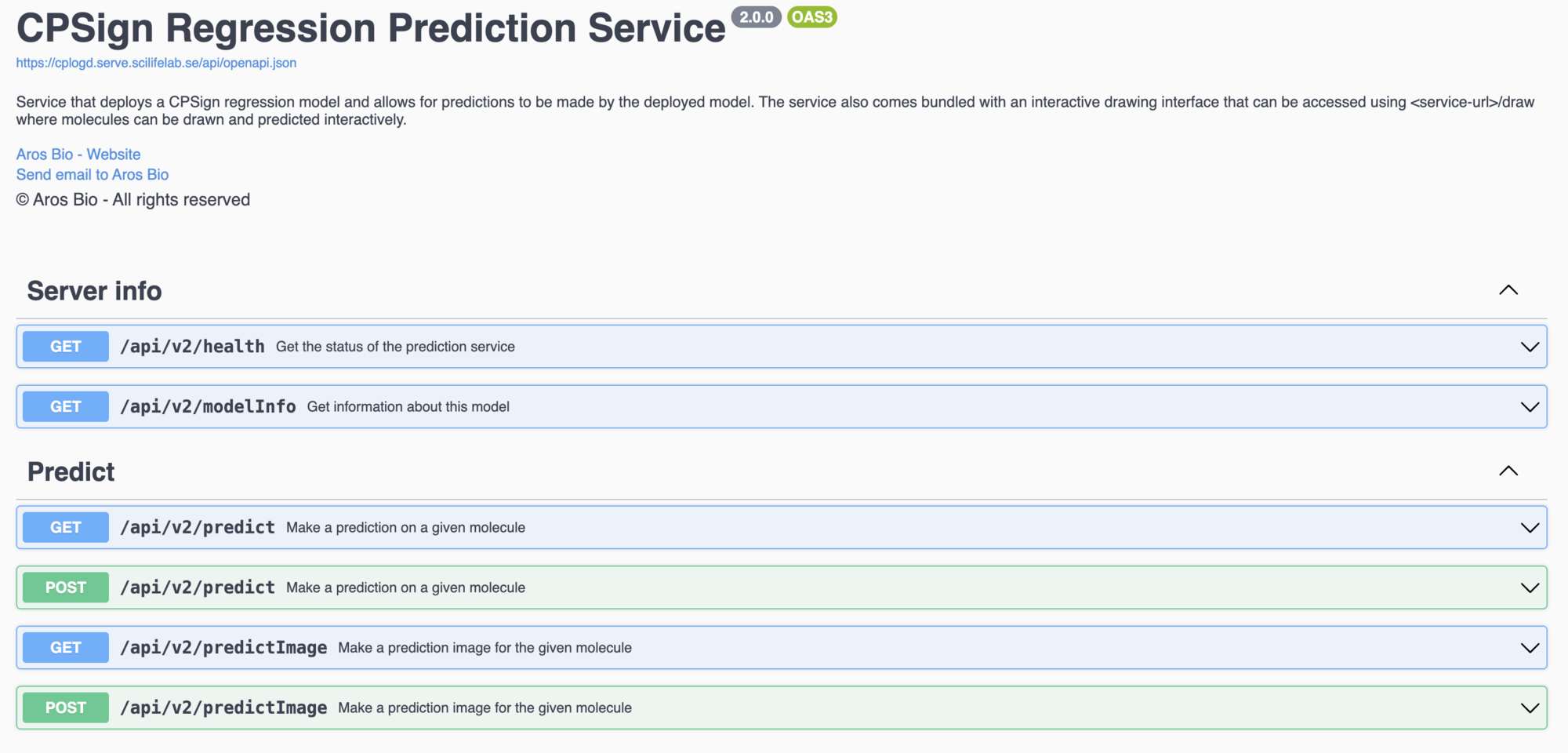

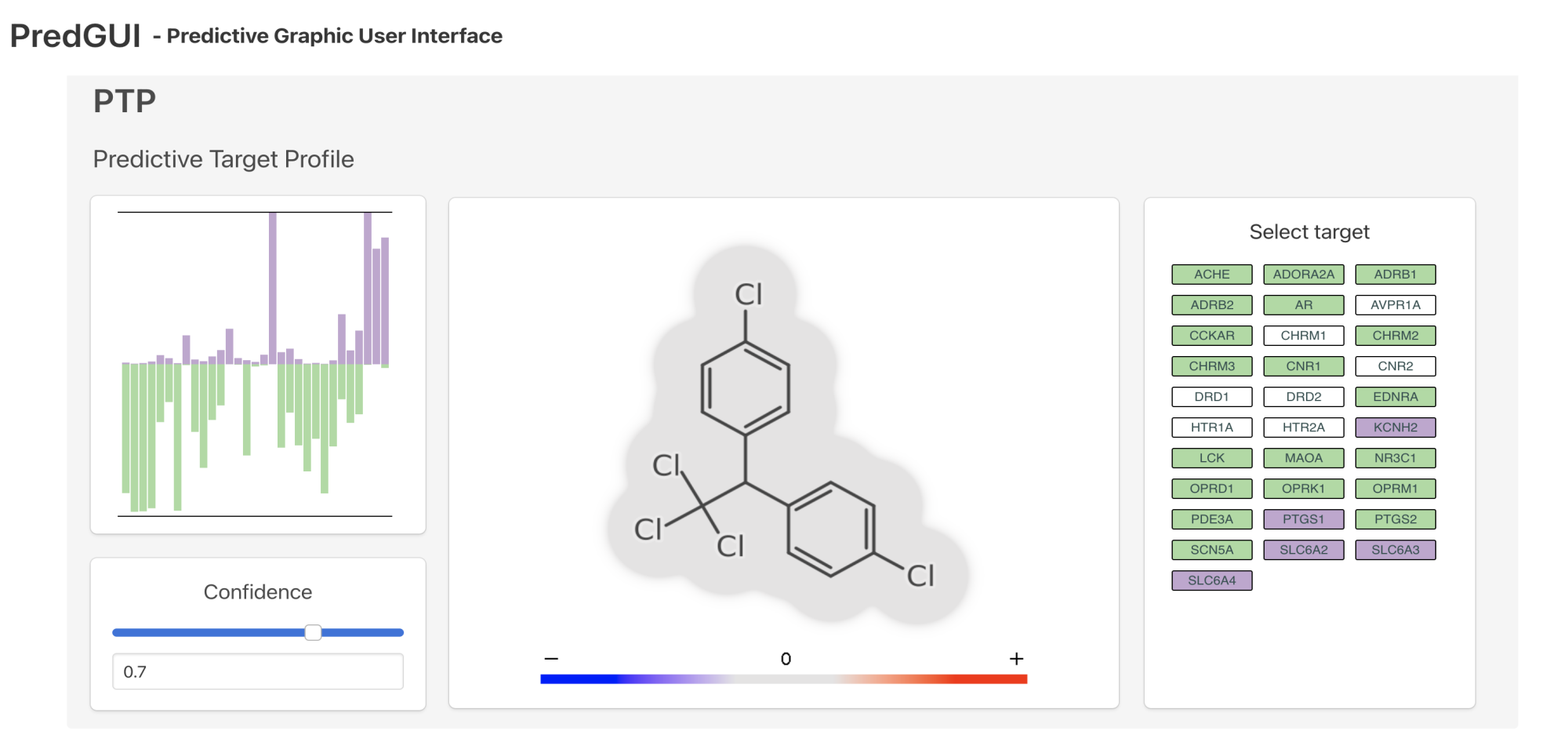

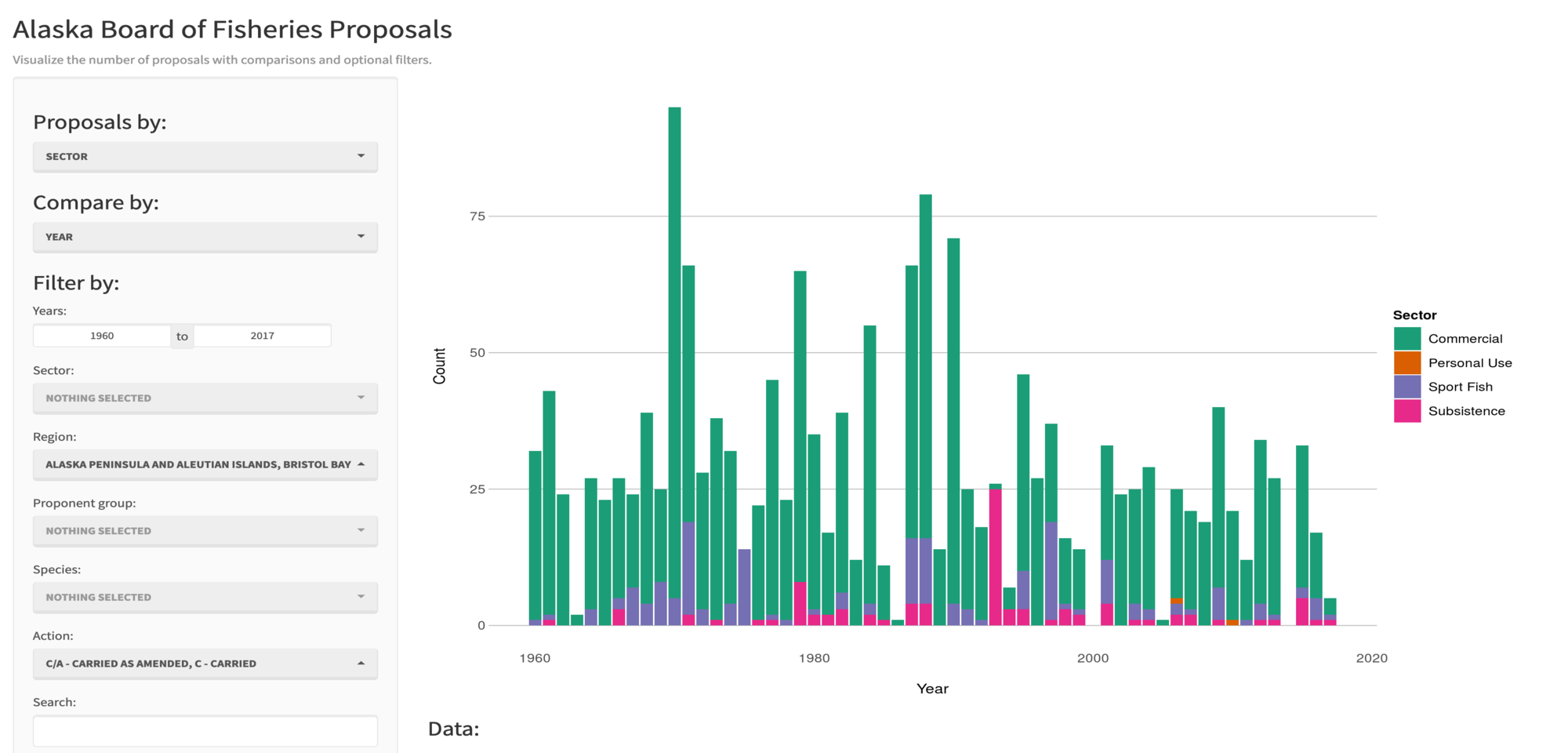

SciLifeLab Serve (beta) is a platform offering machine learning model serving, app hosting, web-based integrated development environments, and other tools to life science researchers affiliated with a Swedish research institute.

Recent updates

Collections

Collections are groups of apps and models published on SciLifeLab Serve that belong to a particular research community, organization, or topic.